Upload folder using huggingface_hub

Browse files- .gitattributes +3 -0

- README.md +171 -194

- chat_template.jinja +86 -0

- config.json +55 -0

- generation_config.json +11 -0

- model-00001-of-00012.safetensors +3 -0

- model-00002-of-00012.safetensors +3 -0

- model-00003-of-00012.safetensors +3 -0

- model-00004-of-00012.safetensors +3 -0

- model-00005-of-00012.safetensors +3 -0

- model-00006-of-00012.safetensors +3 -0

- model-00007-of-00012.safetensors +3 -0

- model-00008-of-00012.safetensors +3 -0

- model-00009-of-00012.safetensors +3 -0

- model-00010-of-00012.safetensors +3 -0

- model-00011-of-00012.safetensors +3 -0

- model-00012-of-00012.safetensors +3 -0

- model.safetensors.index.json +3 -0

- quantization_config.json +3 -0

- tokenizer.json +3 -0

- tokenizer_config.json +325 -0

.gitattributes

CHANGED

|

@@ -33,3 +33,6 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 33 |

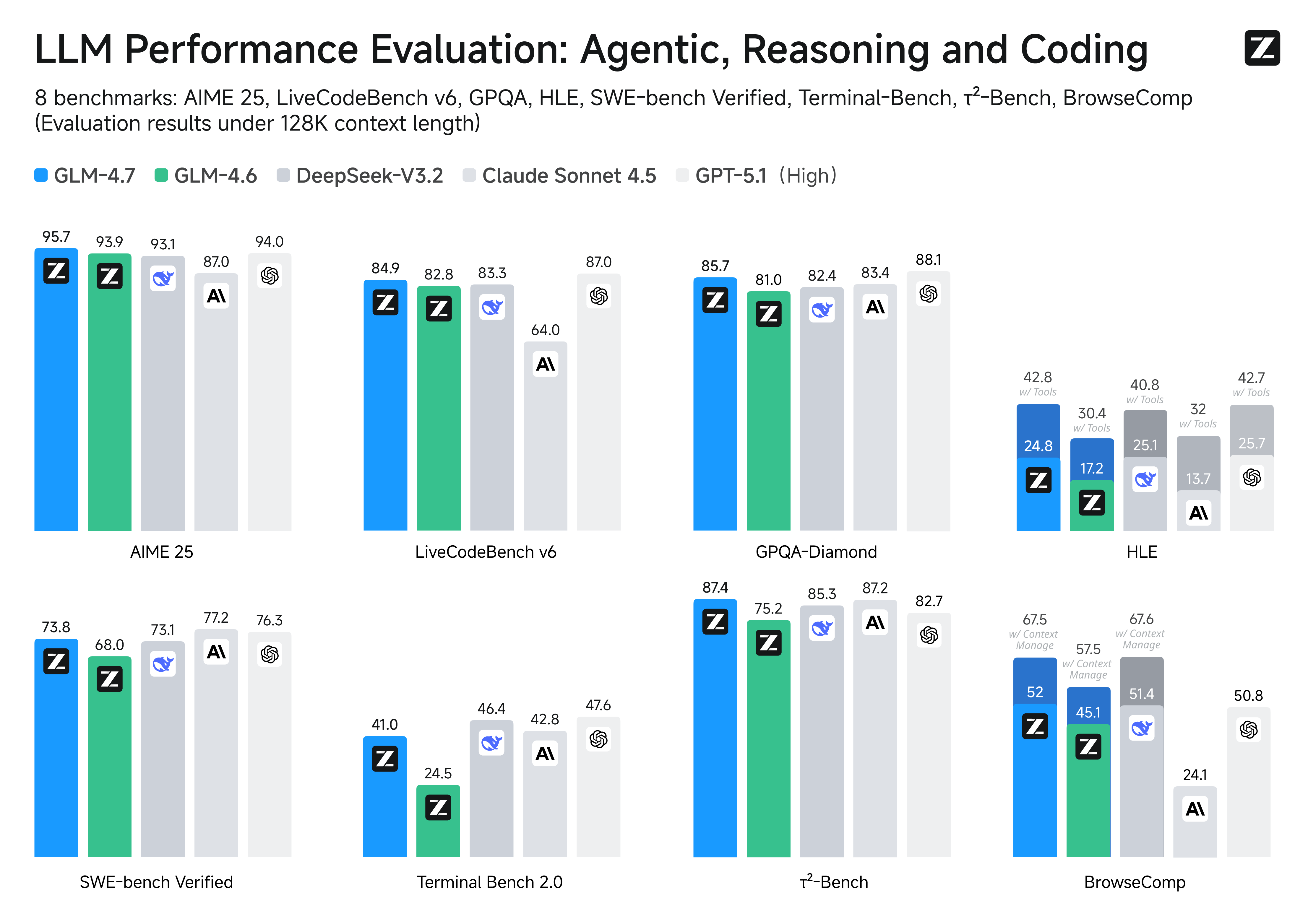

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

|

|

|

|

|

|

|

|

|

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

tokenizer.json filter=lfs diff=lfs merge=lfs -text

|

| 37 |

+

model.safetensors.index.json filter=lfs diff=lfs merge=lfs -text

|

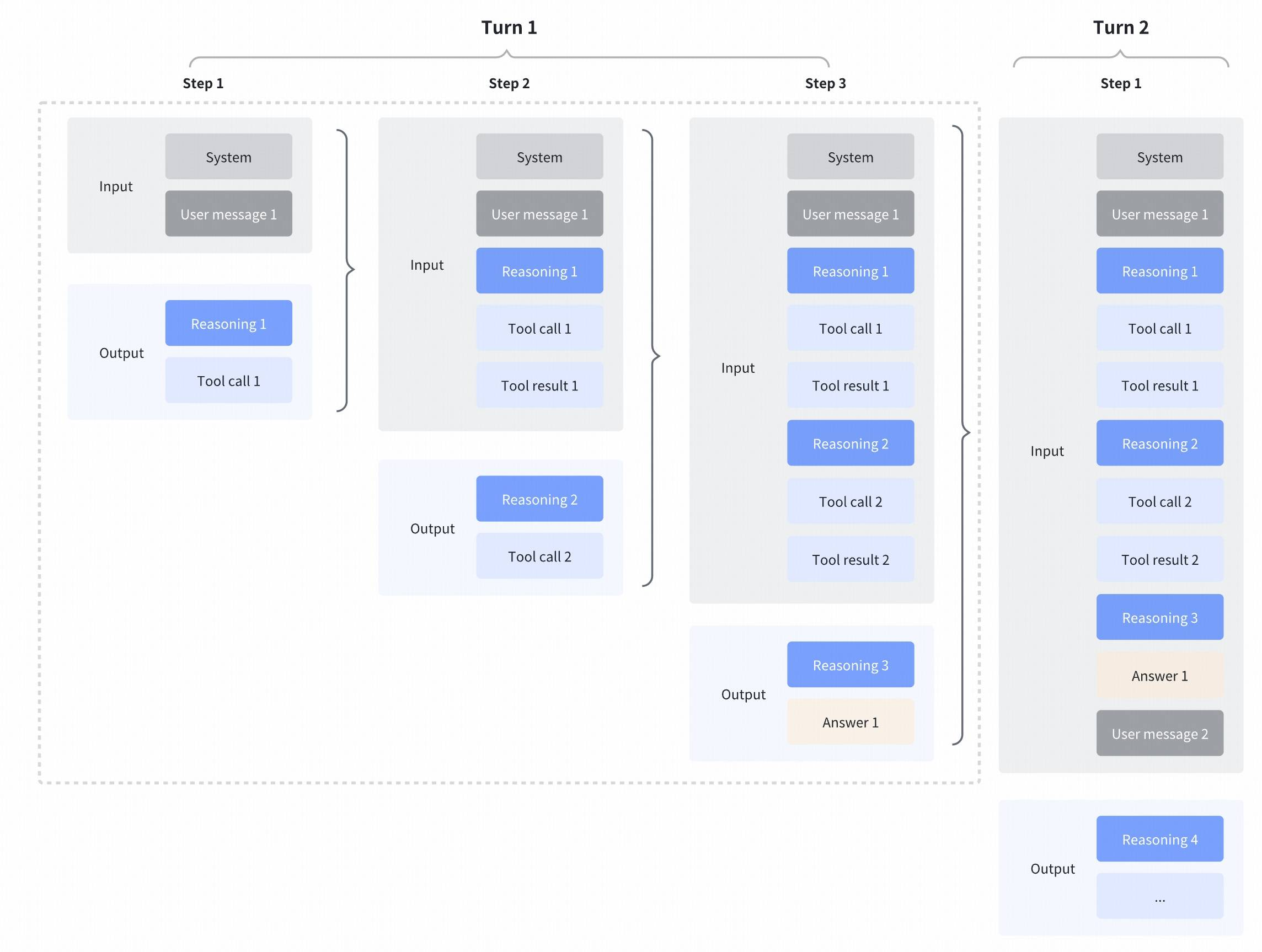

| 38 |

+

quantization_config.json filter=lfs diff=lfs merge=lfs -text

|

README.md

CHANGED

|

@@ -1,240 +1,217 @@

|

|

| 1 |

---

|

|

|

|

|

|

|

|

|

|

|

|

|

| 2 |

license: mit

|

| 3 |

-

base_model: zai-org/GLM-4.7

|

| 4 |

-

base_model_relation: quantized

|

| 5 |

-

quantization: exl3

|

| 6 |

pipeline_tag: text-generation

|

| 7 |

-

tags:

|

| 8 |

-

- exl3

|

| 9 |

-

library_name: exllamav3

|

| 10 |

---

|

| 11 |

|

| 12 |

-

# GLM

|

| 13 |

|

| 14 |

-

|

| 15 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 16 |

|

| 17 |

-

|

| 18 |

-

> ⚠️ Proper support for GLM-4.7 reasoning requires [PR #295 in TabbyAPI](https://github.com/theroyallab/tabbyAPI/pull/295#issuecomment-3689013998), see my modified Dockerfile.

|

| 19 |

|

| 20 |

-

|

| 21 |

-

- base quants (2, 3, 4, 5, 6, 8 bits) for Exllamav3 (using SOTA random Hadamard transforms and Trellis quantization for high-quality reconstruction)

|

| 22 |

-

- layer and tensor level KL-divergence measurements for bit-allocation optimization given a target size

|

| 23 |

-

- theoretical research related to quantization, in particular MoE quantization

|

| 24 |

|

| 25 |

-

|

|

|

|

|

|

|

|

|

|

| 26 |

|

| 27 |

-

|

| 28 |

-

- to provide the best possible quants for what is arguably the top general model of 2025

|

| 29 |

-

- to serve as a reference for quantization strategies (as of 2025 knowledge)

|

| 30 |

|

| 31 |

-

|

| 32 |

|

| 33 |

-

|

| 34 |

|

| 35 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 36 |

|

| 37 |

-

|

| 38 |

|

| 39 |

-

The base quants use the new "MCG" multiplier from https://github.com/turboderp-org/exllamav3/pull/26#issuecomment-3395345415

|

| 40 |

|

| 41 |

-

|

| 42 |

-

- Kullback-Leibler divergence (KL-div) and Top-K agreement measured through: https://github.com/turboderp-org/exllamav3/blob/v0.0.14/eval/model_diff.py

|

| 43 |

-

- Perplexity measured through: https://github.com/turboderp-org/exllamav3/blob/v0.0.14/eval/model_diff.py

|

| 44 |

-

- Caveat both quantization calibration and perplexity use the same dataset in EXL3, hence we have overfitting.\

|

| 45 |

-

The most appropriate measure for quality is KL-divergence (i.e. how well the quant reproduces the original probability distribution of token output, before samplers)\

|

| 46 |

-

For example the 3-bit quant have lower perplexity than the original FP16.\

|

| 47 |

|

| 48 |

-

|

| 49 |

-

| ---------------------------------------------------------------- | ------- | -------------------- | -------------------- | ---------- | ------ | ------ | ------ | ------ | ------ |

|

| 50 |

-

| [2bpw-H6](https://huggingface.co/mratsim/GLM-4.7-EXL3/tree/2bpw_H6) | 83 GiB | 0.65096196 | 0.75914080 | 9.36106675 | 0.7315 | 0.3852 | 0.1653 | 0.0628 | 0.0221 |

|

| 51 |

-

| [3bpw-H6](https://huggingface.co/mratsim/GLM-4.7-EXL3/tree/3bpw_H6) | 124 GiB | 0.27578034 | 0.28499938 | 6.95262863 | 0.8388 | 0.5717 | 0.3306 | 0.1713 | 0.0805 |

|

| 52 |

-

| [4bpw-H6](https://huggingface.co/mratsim/GLM-4.7-EXL3/tree/4bpw_H6) | 165 GiB | 0.13722391 | 0.13577676 | 6.60474035 | 0.8947 | 0.6948 | 0.4810 | 0.3007 | 0.1754 |

|

| 53 |

-

| 5bpw (pending) | 206 GiB | WIP | WIP | WIP | WIP | WIP | WIP | WIP | WIP |

|

| 54 |

-

| [6bpw-H8](https://huggingface.co/mratsim/GLM-4.7-EXL3/tree/6bpw_H8) | 247 GiB | 0.08202591 | 0.0784423 | 6.32611481 | 0.9334 | 0.7951 | 0.6274 | 0.4597 | 0.3190 |

|

| 55 |

-

| [8bpw-H8](https://huggingface.co/mratsim/GLM-4.7-EXL3/tree/8bpw_H8) | 328 GiB | 0.07552261 | 0.07230427 | 6.38240525 | 0.9396 | 0.8172 | 0.6598 | 0.5048 | 0.3666 |

|

| 56 |

-

| FP16 | 656 GiB | | | 6.49784813 | | | | | |

|

| 57 |

|

| 58 |

-

|

| 59 |

|

| 60 |

-

|

| 61 |

-

|

| 62 |

-

|

| 63 |

-

|

|

|

|

|

|

|

| 64 |

|

| 65 |

-

|

| 66 |

-

| -------------------------------------------------------------------------------- | ---------- | ----------------------------------------- | -------------------- | -------------------- | ---------- | ------ | ------ | ------ | ------ | ------ |

|

| 67 |

-

| [3.84bpw-tuned🂱](https://huggingface.co/mratsim/GLM-4.7-EXL3/tree/3.84bpw-tuned)| 158GiB GiB | 202752 tokens (max), k6v5 for 192GiB VRAM | 0.15823333 | 0.15401253 | 6.41935951 | 0.8854 | 0.6743 | 0.4587 | 0.2832 | 0.1638 |

|

| 68 |

-

| 4.16bpw-tuned🂱 (WIP)| 171GiB GiB | 107520 tokens, k5v4 for 192GiB VRAM | WIP | WIP | WIP | WIP | WIP | WIP | WIP | WIP |

|

| 69 |

|

| 70 |

-

|

| 71 |

-

- "tuned🂱" for hand-tuned quants

|

| 72 |

|

| 73 |

-

|

| 74 |

-

|

| 75 |

-

|

| 76 |

-

```

|

| 77 |

-

|

| 78 |

-

Unfortunately, as of December 2025 automatically optimized quants are not able to beat hand-tuned heuristics and research-based mixed-precision quantization for I suspect one of 2 reasons (or both):

|

| 79 |

-

- An optimization algorithm with no backtracking, i.e. single-pass but not comparing current layer importance with past layer importance.

|

| 80 |

-

- Not taking synergies into account. Just like LLMs have emergent properties with size, it might be that up-quantizing certain projections significantly improve KL-divergence even if it appears as noise if we only measure improvement of a single up-quant.

|

| 81 |

-

|

| 82 |

-

### Modified Dockerfile

|

| 83 |

-

|

| 84 |

-

You can build a custom tabbyAPI image that integrates PR #395:

|

| 85 |

|

| 86 |

-

|

| 87 |

-

# Use an official CUDA runtime with Ubuntu as a parent image

|

| 88 |

-

FROM nvidia/cuda:12.8.1-runtime-ubuntu24.04

|

| 89 |

|

| 90 |

-

|

| 91 |

-

RUN apt-get update && apt-get install -y --no-install-recommends \

|

| 92 |

-

build-essential \

|

| 93 |

-

curl \

|

| 94 |

-

ca-certificates \

|

| 95 |

-

python3.12 \

|

| 96 |

-

python3-pip \

|

| 97 |

-

python3.12-venv \

|

| 98 |

-

git \

|

| 99 |

-

&& rm -rf /var/lib/apt/lists/*

|

| 100 |

|

| 101 |

-

|

| 102 |

-

|

|

|

|

| 103 |

|

| 104 |

-

|

| 105 |

-

ENV PATH="/opt/venv/bin:$PATH"

|

| 106 |

|

| 107 |

-

|

| 108 |

-

|

| 109 |

|

| 110 |

-

|

| 111 |

-

WORKDIR /app

|

| 112 |

|

| 113 |

-

|

| 114 |

-

RUN git clone https://github.com/theroyallab/tabbyAPI.git /app

|

| 115 |

|

| 116 |

-

|

| 117 |

-

RUN git config --global user.email "docker@tabbyapi.local" && \

|

| 118 |

-

git config --global user.name "Docker Build"

|

| 119 |

|

| 120 |

-

# Fetch and merge PR #295

|

| 121 |

-

RUN git fetch origin pull/295/head:pr-295 && \

|

| 122 |

-

git merge --strategy-option theirs pr-295

|

| 123 |

|

| 124 |

-

|

| 125 |

-

RUN pip install --no-cache-dir .[cu12,extras]

|

| 126 |

|

| 127 |

-

|

| 128 |

-

EXPOSE 5000

|

| 129 |

|

| 130 |

-

|

| 131 |

-

ENTRYPOINT ["python3"]

|

| 132 |

|

| 133 |

-

|

| 134 |

-

|

| 135 |

```

|

| 136 |

|

| 137 |

-

|

| 138 |

-

|

| 139 |

-

Exllamav3 offers tools to measure per layer (with `-l2`) or even per-tensor (with `-l3`) contributions to KL-div improvements.

|

| 140 |

-

They might take 2 hours to 5 hours, if comparing 2 quants -- to 12 hours if comparing 3 quants -- to 24h of compute if comparing all quants.

|

| 141 |

-

|

| 142 |

-

> [!WARNING]

|

| 143 |

-

> ⚠️ Quantization in-progress, no measurements comparison available

|

| 144 |

-

|

| 145 |

-

The json file can be fed to https://github.com/turboderp-org/exllamav3/blob/v0.0.14/util/optimize.py with a target `bpw` to output an optimized quant.

|

| 146 |

-

|

| 147 |

-

Please note that from experimentations, manual tuning using the heuristics below can achieve better KL-divergence than optimizing by only mixing 3 quants and is less likely to overfit the calibration set. Having `shared experts` or `self_attn` layers use 6 or even 8-bit provide a very large improvement to KL-divergence. Even a measurement with all available quants currently doesn't achieve manual tuning results.

|

| 148 |

-

|

| 149 |

-

## Quantization theory and heuristics for manual tuning

|

| 150 |

-

|

| 151 |

-

<details>

|

| 152 |

-

<summary>In-depth overview of quantization theory and heuristics for manual tuning</summary>

|

| 153 |

-

|

| 154 |

-

### Layers to quantize

|

| 155 |

-

|

| 156 |

-

Quantization should be focused on Linear layers (also called Dense or Fully-Connected layers i.e. MatMul+Bias)

|

| 157 |

-

In particular quantizing LayerNorm/RMSnorm layer is strongly discouraged, see [1]

|

| 158 |

-

> LayerNorm in Quantization. Kovaleva et al. (2021); Wei et al. (2022) find that outliers in the

|

| 159 |

-

> LayerNorm parameters of BERT (Devlin et al., 2019) cause difficulties in model compression.

|

| 160 |

-

> Given the importance of LayerNorm, all the quantization methods we discuss above leave LayerNorm unquantized.

|

| 161 |

-

|

| 162 |

-

This is also reported in Intel and Nvidia repo:

|

| 163 |

-

- https://github.com/intel/neural-compressor/issues/1963#issuecomment-2274873441

|

| 164 |

-

- https://github.com/NVIDIA/TensorRT/issues/4084#issuecomment-2294513950

|

| 165 |

-

|

| 166 |

-

EXL3 can only quantize linear layers.

|

| 167 |

-

|

| 168 |

-

### Tensors to up-quantize

|

| 169 |

-

|

| 170 |

-

If there is enough bits, down projections should be prioritized.

|

| 171 |

|

| 172 |

-

|

| 173 |

-

|

| 174 |

-

|

| 175 |

-

> spikes in input and output. We also observe that RMSNorm propagate spikes through the entire model

|

| 176 |

-

|

| 177 |

-

According to [5]

|

| 178 |

-

> Figure 5(a) illustrates the extremal ratio across layers and modules in LLaMA2-7B, highlighting

|

| 179 |

-

> that weight outliers are concentrated in the down-projection matrices Wdown

|

| 180 |

-

> ℓ of the second layer and

|

| 181 |

-

> the last two layers. Figures 5(b) and 5(c) provide detailed visualizations of these outliers in the last

|

| 182 |

-

> two layers.

|

| 183 |

-

|

| 184 |

-

### Mixture-of-Experts quantization (MoE)

|

| 185 |

-

|

| 186 |

-

Mixture-of-Experts require specific quantization techniques.

|

| 187 |

-

|

| 188 |

-

#### Mixed-precision quantization

|

| 189 |

-

|

| 190 |

-

Some layers have a higher impact on LLM performance.

|

| 191 |

-

According to [2], spending more bits in attention layers results in large gain compared to spending them in FFN layers.

|

| 192 |

-

According to [3] on 2-bit quantization:

|

| 193 |

-

- quantizing expert FFN layers do not seriously impact model quality

|

| 194 |

-

- quantizing cross-attention has some impact

|

| 195 |

-

- quantizing self-attention has a large impact

|

| 196 |

-

- quantizing dense FFN has a very significant impact

|

| 197 |

-

|

| 198 |

-

Hence to preserve model quality we should choose not to quantize dense FFN layers and self-attention layers.

|

| 199 |

-

|

| 200 |

-

We notice that:

|

| 201 |

-

- official MXFP4 weights of gpt-oss-120b from OpenAI keep self-attention in BF16:

|

| 202 |

-

- https://huggingface.co/openai/gpt-oss-120b/blob/main/model.safetensors.index.json

|

| 203 |

-

- NVFP4 weights of DeepSeek-R1 quantized by Nvidia also keep self-attention in BF16:

|

| 204 |

-

- https://huggingface.co/nvidia/DeepSeek-R1-0528-FP4/blob/main/model.safetensors.index.json

|

| 205 |

-

|

| 206 |

-

#### Layers with high-impact

|

| 207 |

-

|

| 208 |

-

According to [2], giving more bits to the first `k` blocks have a significantly higher impact on model quality than for the same last `k` blocks.

|

| 209 |

-

|

| 210 |

-

#### Expert quantization

|

| 211 |

-

|

| 212 |

-

When quantizing MoE, quantizing activations is tricky as only a subset of experts are activated per request.

|

| 213 |

|

| 214 |

-

|

| 215 |

|

| 216 |

-

|

| 217 |

|

| 218 |

-

|

| 219 |

-

|

| 220 |

-

|

| 221 |

|

| 222 |

-

|

| 223 |

-

|

| 224 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 225 |

|

| 226 |

-

|

| 227 |

-

Young Jin Kim, Raffy Fahim, Hany Hassan Awadalla\

|

| 228 |

-

https://arxiv.org/pdf/2310.02410

|

| 229 |

|

| 230 |

-

|

| 231 |

-

|

| 232 |

-

|

| 233 |

-

|

| 234 |

-

|

|

|

|

|

|

|

|

|

|

| 235 |

|

| 236 |

-

|

| 237 |

-

|

| 238 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 239 |

|

| 240 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

---

|

| 2 |

+

language:

|

| 3 |

+

- en

|

| 4 |

+

- zh

|

| 5 |

+

library_name: transformers

|

| 6 |

license: mit

|

|

|

|

|

|

|

|

|

|

| 7 |

pipeline_tag: text-generation

|

|

|

|

|

|

|

|

|

|

| 8 |

---

|

| 9 |

|

| 10 |

+

# GLM-4.7

|

| 11 |

|

| 12 |

+

<div align="center">

|

| 13 |

+

<img src=https://raw.githubusercontent.com/zai-org/GLM-4.5/refs/heads/main/resources/logo.svg width="15%"/>

|

| 14 |

+

</div>

|

| 15 |

+

<p align="center">

|

| 16 |

+

👋 Join our <a href="https://discord.gg/QR7SARHRxK" target="_blank">Discord</a> community.

|

| 17 |

+

<br>

|

| 18 |

+

📖 Check out the GLM-4.7 <a href="https://z.ai/blog/glm-4.7" target="_blank">technical blog</a>, <a href="https://arxiv.org/abs/2508.06471" target="_blank">technical report(GLM-4.5)</a>.

|

| 19 |

+

<br>

|

| 20 |

+

📍 Use GLM-4.7 API services on <a href="https://docs.z.ai/guides/llm/glm-4.7">Z.ai API Platform. </a>

|

| 21 |

+

<br>

|

| 22 |

+

👉 One click to <a href="https://chat.z.ai">GLM-4.7</a>.

|

| 23 |

+

</p>

|

| 24 |

|

| 25 |

+

## Introduction

|

|

|

|

| 26 |

|

| 27 |

+

**GLM-4.7**, your new coding partner, is coming with the following features:

|

|

|

|

|

|

|

|

|

|

| 28 |

|

| 29 |

+

- **Core Coding**: GLM-4.7 brings clear gains, compared to its predecessor GLM-4.6, in multilingual agentic coding and terminal-based tasks, including (73.8%, +5.8%) on SWE-bench, (66.7%, +12.9%) on SWE-bench Multilingual, and (41%, +16.5%) on Terminal Bench 2.0. GLM-4.7 also supports thinking before acting, with significant improvements on complex tasks in mainstream agent frameworks such as Claude Code, Kilo Code, Cline, and Roo Code.

|

| 30 |

+

- **Vibe Coding**: GLM-4.7 takes a big step forward in improving UI quality. It produces cleaner, more modern webpages and generates better-looking slides with more accurate layout and sizing.

|

| 31 |

+

- **Tool Using**: GLM-4.7 achieves significantly improvements in Tool using. Significant better performances can be seen on benchmarks such as τ^2-Bench and on web browsing via BrowseComp.

|

| 32 |

+

- **Complex Reasoning**: GLM-4.7 delivers a substantial boost in mathematical and reasoning capabilities, achieving (42.8%, +12.4%) on the HLE (Humanity’s Last Exam) benchmark compared to GLM-4.6.

|

| 33 |

|

| 34 |

+

You can also see significant improvements in many other scenarios such as chat, creative writing, and role-play scenario.

|

|

|

|

|

|

|

| 35 |

|

| 36 |

+

|

| 37 |

|

| 38 |

+

**Performances on Benchmarks.** More detailed comparisons of GLM-4.7 with other models GPT-5-High, GPT-5.1-High, Claude Sonnet 4.5, Gemini 3.0 Pro, DeepSeek-V3.2, Kimi K2 Thinking, on 17 benchmarks (including 8 reasoning, 5 coding, and 3 agents benchmarks) can be seen in the below table.

|

| 39 |

|

| 40 |

+

| Benchmark | GLM-4.7 | GLM-4.6 | Kimi K2 Thinking | DeepSeek-V3.2 | Gemini 3.0 Pro | Claude Sonnet 4.5 | GPT-5-High | GPT-5.1-High |

|

| 41 |

+

|:-------------------------------|:-------:|:-------:|:----------------:|:-------------:|:--------------:|:-----------------:|:----------:|:------------:|

|

| 42 |

+

| MMLU-Pro | 84.3 | 83.2 | 84.6 | 85.0 | 90.1 | 88.2 | 87.5 | 87.0 |

|

| 43 |

+

| GPQA-Diamond | 85.7 | 81.0 | 84.5 | 82.4 | 91.9 | 83.4 | 85.7 | 88.1 |

|

| 44 |

+

| HLE | 24.8 | 17.2 | 23.9 | 25.1 | 37.5 | 13.7 | 26.3 | 25.7 |

|

| 45 |

+

| HLE (w/ Tools) | 42.8 | 30.4 | 44.9 | 40.8 | 45.8 | 32.0 | 35.2 | 42.7 |

|

| 46 |

+

| AIME 2025 | 95.7 | 93.9 | 94.5 | 93.1 | 95.0 | 87.0 | 94.6 | 94.0 |

|

| 47 |

+

| HMMT Feb. 2025 | 97.1 | 89.2 | 89.4 | 92.5 | 97.5 | 79.2 | 88.3 | 96.3 |

|

| 48 |

+

| HMMT Nov. 2025 | 93.5 | 87.7 | 89.2 | 90.2 | 93.3 | 81.7 | 89.2 | - |

|

| 49 |

+

| IMOAnswerBench | 82.0 | 73.5 | 78.6 | 78.3 | 83.3 | 65.8 | 76.0 | - |

|

| 50 |

+

| LiveCodeBench-v6 | 84.9 | 82.8 | 83.1 | 83.3 | 90.7 | 64.0 | 87.0 | 87.0 |

|

| 51 |

+

| SWE-bench Verified | 73.8 | 68.0 | 71.3 | 73.1 | 76.2 | 77.2 | 74.9 | 76.3 |

|

| 52 |

+

| SWE-bench Multilingual | 66.7 | 53.8 | 61.1 | 70.2 | - | 68.0 | 55.3 | - |

|

| 53 |

+

| Terminal Bench Hard | 33.3 | 23.6 | 30.6 | 35.4 | 39.0 | 33.3 | 30.5 | 43.0 |

|

| 54 |

+

| Terminal Bench 2.0 | 41.0 | 24.5 | 35.7 | 46.4 | 54.2 | 42.8 | 35.2 | 47.6 |

|

| 55 |

+

| BrowseComp | 52.0 | 45.1 | - | 51.4 | - | 24.1 | 54.9 | 50.8 |

|

| 56 |

+

| BrowseComp (w/ Context Manage) | 67.5 | 57.5 | 60.2 | 67.6 | 59.2 | - | - | - |

|

| 57 |

+

| BrowseComp-Zh | 66.6 | 49.5 | 62.3 | 65.0 | - | 42.4 | 63.0 | - |

|

| 58 |

+

| τ²-Bench | 87.4 | 75.2 | 74.3 | 85.3 | 90.7 | 87.2 | 82.4 | 82.7 |

|

| 59 |

|

| 60 |

+

> **Coding:** AGI is a long journey, and benchmarks are only one way to evaluate performance. While the metrics provide necessary checkpoints, the most important thing is still how it *feels*. True intelligence isn't just about acing a test or processing data faster; ultimately, the success of AGI will be measured by how seamlessly it integrates into our lives-**"coding"** this time.

|

| 61 |

|

|

|

|

| 62 |

|

| 63 |

+

## Getting started with GLM-4.7

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 64 |

|

| 65 |

+

### Interleaved Thinking & Preserved Thinking

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 66 |

|

| 67 |

+

|

| 68 |

|

| 69 |

+

GLM-4.7 further enhances **Interleaved Thinking** (a feature introduced since GLM-4.5) and introduces **Preserved Thinking** and **Turn-level Thinking**. By thinking between actions and staying consistent across turns, it makes complex tasks more stable and more controllable:

|

| 70 |

+

- **Interleaved Thinking**: The model thinks before every response and tool calling, improving instruction following and the quality of generation.

|

| 71 |

+

- **Preserved Thinking**: In coding agent scenarios, the model automatically retains all thinking blocks across multi-turn conversations, reusing the existing reasoning instead of re-deriving from scratch. This reduces information loss and inconsistencies, and is well-suited for long-horizon, complex tasks.

|

| 72 |

+

- **Turn-level Thinking**: The model supports per-turn control over reasoning within a session—disable thinking for lightweight requests to reduce latency/cost, enable it for complex tasks to improve accuracy and stability.

|

| 73 |

+

|

| 74 |

+

More details: https://docs.z.ai/guides/capabilities/thinking-mode

|

| 75 |

|

| 76 |

+

### Evaluation Parameters

|

|

|

|

|

|

|

|

|

|

| 77 |

|

| 78 |

+

**Default Settings (Most Tasks)**

|

|

|

|

| 79 |

|

| 80 |

+

* temperature: `1.0`

|

| 81 |

+

* top-p: `0.95`

|

| 82 |

+

* max new tokens: `131072`

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 83 |

|

| 84 |

+

For multi-turn agentic tasks (τ²-Bench and Terminal Bench 2), please turn on [Preserved Thinking mode](https://docs.z.ai/guides/capabilities/thinking-mode).

|

|

|

|

|

|

|

| 85 |

|

| 86 |

+

**Terminal Bench, SWE Bench Verified**

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 87 |

|

| 88 |

+

* temperature: `0.7`

|

| 89 |

+

* top-p: `1.0`

|

| 90 |

+

* max new tokens: `16384`

|

| 91 |

|

| 92 |

+

**τ^2-Bench**

|

|

|

|

| 93 |

|

| 94 |

+

* Temperature: `0`

|

| 95 |

+

* Max new tokens: `16384`

|

| 96 |

|

| 97 |

+

For τ^2-Bench evaluation, we added an additional prompt to the Retail and Telecom user interaction to avoid failure modes caused by users ending the interaction incorrectly. For the Airline domain, we applied the domain fixes as proposed in the [Claude Opus 4.5](https://assets.anthropic.com/m/64823ba7485345a7/Claude-Opus-4-5-System-Card.pdf) release report.

|

|

|

|

| 98 |

|

| 99 |

+

## Serve GLM-4.7 Locally

|

|

|

|

| 100 |

|

| 101 |

+

For local deployment, GLM-4.7 supports inference frameworks including vLLM and SGLang. Comprehensive deployment instructions are available in the official [Github](https://github.com/zai-org/GLM-4.5) repository.

|

|

|

|

|

|

|

| 102 |

|

|

|

|

|

|

|

|

|

|

| 103 |

|

| 104 |

+

vLLM and SGLang only support GLM-4.7 on their main branches. you can use their official docker images for inference.

|

|

|

|

| 105 |

|

| 106 |

+

### vLLM

|

|

|

|

| 107 |

|

| 108 |

+

Using Docker as:

|

|

|

|

| 109 |

|

| 110 |

+

```shell

|

| 111 |

+

docker pull vllm/vllm-openai:nightly

|

| 112 |

```

|

| 113 |

|

| 114 |

+

or using pip (must use pypi.org as the index url):

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 115 |

|

| 116 |

+

```shell

|

| 117 |

+

pip install -U vllm --pre --index-url https://pypi.org/simple --extra-index-url https://wheels.vllm.ai/nightly

|

| 118 |

+

```

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 119 |

|

| 120 |

+

### SGLang

|

| 121 |

|

| 122 |

+

Using Docker as:

|

| 123 |

|

| 124 |

+

```shell

|

| 125 |

+

docker pull lmsysorg/sglang:dev

|

| 126 |

+

```

|

| 127 |

|

| 128 |

+

or using pip install sglang from source.

|

| 129 |

+

|

| 130 |

+

|

| 131 |

+

### transformers

|

| 132 |

+

|

| 133 |

+

using with transformers as `4.57.3` and then run:

|

| 134 |

+

|

| 135 |

+

```python

|

| 136 |

+

import torch

|

| 137 |

+

from transformers import AutoModelForCausalLM, AutoTokenizer

|

| 138 |

+

|

| 139 |

+

MODEL_PATH = "zai-org/GLM-4.7"

|

| 140 |

+

messages = [{"role": "user", "content": "hello"}]

|

| 141 |

+

tokenizer = AutoTokenizer.from_pretrained(MODEL_PATH)

|

| 142 |

+

inputs = tokenizer.apply_chat_template(

|

| 143 |

+

messages,

|

| 144 |

+

tokenize=True,

|

| 145 |

+

add_generation_prompt=True,

|

| 146 |

+

return_dict=True,

|

| 147 |

+

return_tensors="pt",

|

| 148 |

+

)

|

| 149 |

+

model = AutoModelForCausalLM.from_pretrained(

|

| 150 |

+

pretrained_model_name_or_path=MODEL_PATH,

|

| 151 |

+

torch_dtype=torch.bfloat16,

|

| 152 |

+

device_map="auto",

|

| 153 |

+

)

|

| 154 |

+

inputs = inputs.to(model.device)

|

| 155 |

+

generated_ids = model.generate(**inputs, max_new_tokens=128, do_sample=False)

|

| 156 |

+

output_text = tokenizer.decode(generated_ids[0][inputs.input_ids.shape[1] :])

|

| 157 |

+

print(output_text)

|

| 158 |

+

```

|

| 159 |

|

| 160 |

+

### vLLM

|

|

|

|

|

|

|

| 161 |

|

| 162 |

+

```shell

|

| 163 |

+

vllm serve zai-org/GLM-4.7-FP8 \

|

| 164 |

+

--tensor-parallel-size 8 \

|

| 165 |

+

--tool-call-parser glm47 \

|

| 166 |

+

--reasoning-parser glm45 \

|

| 167 |

+

--enable-auto-tool-choice \

|

| 168 |

+

--served-model-name glm-4.7-fp8

|

| 169 |

+

```

|

| 170 |

|

| 171 |

+

### SGLang

|

| 172 |

+

|

| 173 |

+

```shell

|

| 174 |

+

python3 -m sglang.launch_server \

|

| 175 |

+

--model-path zai-org/GLM-4.7-FP8 \

|

| 176 |

+

--tp-size 8 \

|

| 177 |

+

--tool-call-parser glm47 \

|

| 178 |

+

--reasoning-parser glm45 \

|

| 179 |

+

--speculative-algorithm EAGLE \

|

| 180 |

+

--speculative-num-steps 3 \

|

| 181 |

+

--speculative-eagle-topk 1 \

|

| 182 |

+

--speculative-num-draft-tokens 4 \

|

| 183 |

+

--mem-fraction-static 0.8 \

|

| 184 |

+

--served-model-name glm-4.7-fp8 \

|

| 185 |

+

--host 0.0.0.0 \

|

| 186 |

+

--port 8000

|

| 187 |

+

```

|

| 188 |

|

| 189 |

+

### Parameter Instructions

|

| 190 |

+

|

| 191 |

+

- For agentic tasks of GLM-4.7, please turn on [Preserved Thinking mode](https://docs.z.ai/guides/capabilities/thinking-mode) by adding the following config (only sglang support):

|

| 192 |

+

|

| 193 |

+

```

|

| 194 |

+

"chat_template_kwargs": {

|

| 195 |

+

"enable_thinking": true,

|

| 196 |

+

"clear_thinking": false

|

| 197 |

+

}

|

| 198 |

+

```

|

| 199 |

+

|

| 200 |

+

- When using `vLLM` and `SGLang`, thinking mode is enabled by default when sending requests. If you want to disable the thinking switch, you need to add the `extra_body={"chat_template_kwargs": {"enable_thinking": False}}` parameter.

|

| 201 |

+

- Both support tool calling. Please use OpenAI-style tool description format for calls.

|

| 202 |

+

|

| 203 |

+

|

| 204 |

+

## Citation

|

| 205 |

+

|

| 206 |

+

If you find our work useful in your research, please consider citing the following paper:

|

| 207 |

+

|

| 208 |

+

```bibtex

|

| 209 |

+

@misc{5team2025glm45agenticreasoningcoding,

|

| 210 |

+

title={GLM-4.5: Agentic, Reasoning, and Coding (ARC) Foundation Models},

|

| 211 |

+

author={GLM Team and Aohan Zeng and Xin Lv and Qinkai Zheng and Zhenyu Hou and Bin Chen and Chengxing Xie and Cunxiang Wang and Da Yin and Hao Zeng and Jiajie Zhang and Kedong Wang and Lucen Zhong and Mingdao Liu and Rui Lu and Shulin Cao and Xiaohan Zhang and Xuancheng Huang and Yao Wei and Yean Cheng and Yifan An and Yilin Niu and Yuanhao Wen and Yushi Bai and Zhengxiao Du and Zihan Wang and Zilin Zhu and Bohan Zhang and Bosi Wen and Bowen Wu and Bowen Xu and Can Huang and Casey Zhao and Changpeng Cai and Chao Yu and Chen Li and Chendi Ge and Chenghua Huang and Chenhui Zhang and Chenxi Xu and Chenzheng Zhu and Chuang Li and Congfeng Yin and Daoyan Lin and Dayong Yang and Dazhi Jiang and Ding Ai and Erle Zhu and Fei Wang and Gengzheng Pan and Guo Wang and Hailong Sun and Haitao Li and Haiyang Li and Haiyi Hu and Hanyu Zhang and Hao Peng and Hao Tai and Haoke Zhang and Haoran Wang and Haoyu Yang and He Liu and He Zhao and Hongwei Liu and Hongxi Yan and Huan Liu and Huilong Chen and Ji Li and Jiajing Zhao and Jiamin Ren and Jian Jiao and Jiani Zhao and Jianyang Yan and Jiaqi Wang and Jiayi Gui and Jiayue Zhao and Jie Liu and Jijie Li and Jing Li and Jing Lu and Jingsen Wang and Jingwei Yuan and Jingxuan Li and Jingzhao Du and Jinhua Du and Jinxin Liu and Junkai Zhi and Junli Gao and Ke Wang and Lekang Yang and Liang Xu and Lin Fan and Lindong Wu and Lintao Ding and Lu Wang and Man Zhang and Minghao Li and Minghuan Xu and Mingming Zhao and Mingshu Zhai and Pengfan Du and Qian Dong and Shangde Lei and Shangqing Tu and Shangtong Yang and Shaoyou Lu and Shijie Li and Shuang Li and Shuang-Li and Shuxun Yang and Sibo Yi and Tianshu Yu and Wei Tian and Weihan Wang and Wenbo Yu and Weng Lam Tam and Wenjie Liang and Wentao Liu and Xiao Wang and Xiaohan Jia and Xiaotao Gu and Xiaoying Ling and Xin Wang and Xing Fan and Xingru Pan and Xinyuan Zhang and Xinze Zhang and Xiuqing Fu and Xunkai Zhang and Yabo Xu and Yandong Wu and Yida Lu and Yidong Wang and Yilin Zhou and Yiming Pan and Ying Zhang and Yingli Wang and Yingru Li and Yinpei Su and Yipeng Geng and Yitong Zhu and Yongkun Yang and Yuhang Li and Yuhao Wu and Yujiang Li and Yunan Liu and Yunqing Wang and Yuntao Li and Yuxuan Zhang and Zezhen Liu and Zhen Yang and Zhengda Zhou and Zhongpei Qiao and Zhuoer Feng and Zhuorui Liu and Zichen Zhang and Zihan Wang and Zijun Yao and Zikang Wang and Ziqiang Liu and Ziwei Chai and Zixuan Li and Zuodong Zhao and Wenguang Chen and Jidong Zhai and Bin Xu and Minlie Huang and Hongning Wang and Juanzi Li and Yuxiao Dong and Jie Tang},

|

| 212 |

+

year={2025},

|

| 213 |

+

eprint={2508.06471},

|

| 214 |

+

archivePrefix={arXiv},

|

| 215 |

+

primaryClass={cs.CL},

|

| 216 |

+

url={https://arxiv.org/abs/2508.06471},

|

| 217 |

+

}

|

chat_template.jinja

ADDED

|

@@ -0,0 +1,86 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

[gMASK]<sop>

|

| 2 |

+

{%- if tools -%}

|

| 3 |

+

<|system|>

|

| 4 |

+

# Tools

|

| 5 |

+

|

| 6 |

+

You may call one or more functions to assist with the user query.

|

| 7 |

+

|

| 8 |

+

You are provided with function signatures within <tools></tools> XML tags:

|

| 9 |

+

<tools>

|

| 10 |

+

{% for tool in tools %}

|

| 11 |

+

{{ tool | tojson(ensure_ascii=False) }}

|

| 12 |

+

{% endfor %}

|

| 13 |

+

</tools>

|

| 14 |

+

|

| 15 |

+

For each function call, output the function name and arguments within the following XML format:

|

| 16 |

+

<tool_call>{function-name}<arg_key>{arg-key-1}</arg_key><arg_value>{arg-value-1}</arg_value><arg_key>{arg-key-2}</arg_key><arg_value>{arg-value-2}</arg_value>...</tool_call>{%- endif -%}

|

| 17 |

+

{%- macro visible_text(content) -%}

|

| 18 |

+

{%- if content is string -%}

|

| 19 |

+

{{- content }}

|

| 20 |

+

{%- elif content is iterable and content is not mapping -%}

|

| 21 |

+

{%- for item in content -%}

|

| 22 |

+

{%- if item is mapping and item.type == 'text' -%}

|

| 23 |

+

{{- item.text }}

|

| 24 |

+

{%- elif item is string -%}

|

| 25 |

+

{{- item }}

|

| 26 |

+

{%- endif -%}

|

| 27 |

+

{%- endfor -%}

|

| 28 |

+

{%- else -%}

|

| 29 |

+

{{- content }}

|

| 30 |

+

{%- endif -%}

|

| 31 |

+

{%- endmacro -%}

|

| 32 |

+

{%- set ns = namespace(last_user_index=-1) %}

|

| 33 |

+

{%- for m in messages %}

|

| 34 |

+

{%- if m.role == 'user' %}

|

| 35 |

+

{% set ns.last_user_index = loop.index0 -%}

|

| 36 |

+

{%- endif %}

|

| 37 |

+

{%- endfor %}

|

| 38 |

+

{% for m in messages %}

|

| 39 |

+

{%- if m.role == 'user' -%}<|user|>{{ visible_text(m.content) }}

|

| 40 |

+

{%- elif m.role == 'assistant' -%}

|

| 41 |

+

<|assistant|>

|

| 42 |

+

{%- set reasoning_content = '' %}

|

| 43 |

+

{%- set content = visible_text(m.content) %}

|

| 44 |

+

{%- if m.reasoning_content is string %}

|

| 45 |

+

{%- set reasoning_content = m.reasoning_content %}

|

| 46 |

+

{%- else %}

|

| 47 |

+

{%- if '</think>' in content %}

|

| 48 |

+

{%- set reasoning_content = content.split('</think>')[0].rstrip('\n').split('<think>')[-1].lstrip('\n') %}

|

| 49 |

+

{%- set content = content.split('</think>')[-1].lstrip('\n') %}

|

| 50 |

+

{%- endif %}

|

| 51 |

+

{%- endif %}

|

| 52 |

+

{%- if ((clear_thinking is defined and not clear_thinking) or loop.index0 > ns.last_user_index) and reasoning_content -%}

|

| 53 |

+

{{ '<think>' + reasoning_content.strip() + '</think>'}}

|

| 54 |

+

{%- else -%}

|

| 55 |

+

{{ '</think>' }}

|

| 56 |

+

{%- endif -%}

|

| 57 |

+

{%- if content.strip() -%}

|

| 58 |

+

{{ content.strip() }}

|

| 59 |

+

{%- endif -%}

|

| 60 |

+

{% if m.tool_calls %}

|

| 61 |

+

{% for tc in m.tool_calls %}

|

| 62 |

+

{%- if tc.function %}

|

| 63 |

+

{%- set tc = tc.function %}

|

| 64 |

+

{%- endif %}

|

| 65 |

+

{{- '<tool_call>' + tc.name -}}

|

| 66 |

+

{% set _args = tc.arguments %}{% for k, v in _args.items() %}<arg_key>{{ k }}</arg_key><arg_value>{{ v | tojson(ensure_ascii=False) if v is not string else v }}</arg_value>{% endfor %}</tool_call>{% endfor %}

|

| 67 |

+

{% endif %}

|

| 68 |

+

{%- elif m.role == 'tool' -%}

|

| 69 |

+

{%- if m.content is string -%}

|

| 70 |

+

{%- if loop.first or (messages[loop.index0 - 1].role != "tool") %}

|

| 71 |

+

{{- '<|observation|>' }}

|

| 72 |

+

{%- endif %}

|

| 73 |

+

{{- '<tool_response>' }}

|

| 74 |

+

{{- m.content }}

|

| 75 |

+

{{- '</tool_response>' }}

|

| 76 |

+

{%- else -%}

|

| 77 |

+

<|observation|>{% for tr in m.content %}

|

| 78 |

+

<tool_response>{{ tr.output if tr.output is defined else tr }}</tool_response>{% endfor -%}

|

| 79 |

+

{% endif -%}

|

| 80 |

+

{%- elif m.role == 'system' -%}

|

| 81 |

+

<|system|>{{ visible_text(m.content) }}

|

| 82 |

+

{%- endif -%}

|

| 83 |

+

{%- endfor -%}

|

| 84 |

+

{%- if add_generation_prompt -%}

|

| 85 |

+

<|assistant|>{{- '</think>' if (enable_thinking is defined and not enable_thinking) else '<think>' -}}

|

| 86 |

+

{%- endif -%}

|

config.json

ADDED

|

@@ -0,0 +1,55 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"architectures": [

|

| 3 |

+

"Glm4MoeForCausalLM"

|

| 4 |

+

],

|

| 5 |

+

"attention_bias": true,

|

| 6 |

+

"attention_dropout": 0.0,

|

| 7 |

+

"pad_token_id": 151329,

|

| 8 |

+

"eos_token_id": [

|

| 9 |

+

151329,

|

| 10 |

+

151336,

|

| 11 |

+

151338

|

| 12 |

+

],

|

| 13 |

+

"head_dim": 128,

|

| 14 |

+

"hidden_act": "silu",

|

| 15 |

+

"hidden_size": 5120,

|

| 16 |

+

"partial_rotary_factor": 0.5,

|

| 17 |

+

"initializer_range": 0.02,

|

| 18 |

+

"intermediate_size": 12288,

|

| 19 |

+

"max_position_embeddings": 202752,

|

| 20 |

+

"model_type": "glm4_moe",

|

| 21 |

+

"moe_intermediate_size": 1536,

|

| 22 |

+

"norm_topk_prob": true,

|

| 23 |

+

"num_attention_heads": 96,

|

| 24 |

+

"n_group": 1,

|

| 25 |

+

"topk_group": 1,

|

| 26 |

+

"n_routed_experts": 160,

|

| 27 |

+

"n_shared_experts": 1,

|

| 28 |

+

"routed_scaling_factor": 2.5,

|

| 29 |

+

"num_experts_per_tok": 8,

|

| 30 |

+

"first_k_dense_replace": 3,

|

| 31 |

+

"num_hidden_layers": 92,

|

| 32 |

+

"num_key_value_heads": 8,

|

| 33 |

+

"rms_norm_eps": 1e-05,

|

| 34 |

+

"rope_scaling": null,

|

| 35 |

+

"rope_theta": 1000000,

|

| 36 |

+

"num_nextn_predict_layers": 1,

|

| 37 |

+

"tie_word_embeddings": false,

|

| 38 |

+

"torch_dtype": "bfloat16",

|

| 39 |

+

"transformers_version": "4.54.0",

|

| 40 |

+

"use_cache": true,

|

| 41 |

+

"use_qk_norm": true,

|

| 42 |

+

"vocab_size": 151552,

|

| 43 |

+

"quantization_config": {

|

| 44 |

+

"quant_method": "exl3",

|

| 45 |

+

"version": "0.0.18",

|

| 46 |

+

"bits": 2.1,

|

| 47 |

+

"head_bits": 6,

|

| 48 |

+

"calibration": {

|

| 49 |

+

"rows": 250,

|

| 50 |

+

"cols": 2048

|

| 51 |

+

},

|

| 52 |

+

"out_scales": "auto",

|

| 53 |

+

"codebook": "mcg"

|

| 54 |

+

}

|

| 55 |

+

}

|

generation_config.json

ADDED

|

@@ -0,0 +1,11 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"_from_model_config": true,

|

| 3 |

+

"eos_token_id": [

|

| 4 |

+

151329,

|

| 5 |

+

151336,

|

| 6 |

+

151338

|

| 7 |

+

],

|

| 8 |

+

"pad_token_id": 151329,

|

| 9 |

+

"temperature": 1.0,

|

| 10 |

+

"transformers_version": "4.56.2"

|

| 11 |

+

}

|

model-00001-of-00012.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:1e9d9ddc770295da2a8a0c32f7d3780158191d3503fac24abf0c0ebb688a1793

|

| 3 |

+

size 8221096612

|

model-00002-of-00012.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:669aeb78753a9dd2696c5d86e11dec0c3c768d02204052fee9a2b9b0596c1510

|

| 3 |

+

size 8241126888

|

model-00003-of-00012.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:03ccd5c80b86c415e2ac16be4eaf96cca0cbdd45edc4588e2858a75e05c15c48

|

| 3 |

+

size 8241128840

|

model-00004-of-00012.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:024b737a20245dfed3e6acbfb502717e1ac73138c95ba0e730806d1b96218dfd

|

| 3 |

+

size 8241128840

|

model-00005-of-00012.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:ce1e9b29e616aa9169ab9d6dc033449077fc257d83ea9d83c325df3c25228254

|

| 3 |

+

size 8241128840

|

model-00006-of-00012.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:5fd50abb08b1e36472224951a7b4b47ac39dc28788360d4917acb87b4380901a

|

| 3 |

+

size 8241128840

|

model-00007-of-00012.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:2693577dbeac7e96b5d76b3ee110273ba831299c9375a13d58591fffb17b1f72

|

| 3 |

+

size 8241128840

|

model-00008-of-00012.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:e7679d4cf73bafe48aa345041927abea0b3af8cbe4925be900f813948c4123b4

|

| 3 |

+

size 8241128840

|

model-00009-of-00012.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:89e8a3207f354f4bdb21a9829c758def406d543e3c5a9eee062f332b378ff544

|

| 3 |

+

size 8241128840

|

model-00010-of-00012.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:2b9de52c57d295c6fa0226cbb6a8807a18762339e03cd378ae568f8fa2e8bd92

|

| 3 |

+

size 8241128840

|

model-00011-of-00012.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:7c2b1e28f1e309c17b3d33d6bff2df80e2bd909985d1c458188f4c75a28c9389

|

| 3 |

+

size 8241128840

|

model-00012-of-00012.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:9c67e3df2ec10aea8703d2c55588fa0a8932250b30a967a6108b6d8d3a097945

|

| 3 |

+

size 3672704016

|

model.safetensors.index.json

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:e80000f110fe064d9909d4b32d1df08fac02e2ece92548ad37d5d6cf462bdf6f

|

| 3 |

+

size 16120608

|

quantization_config.json

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:85a784cfc237ffe8fe309ded203ebcc0173d746b66be5243e367a2d1efc4b9fc

|

| 3 |

+

size 53400800

|

tokenizer.json

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:9340665016419c825c4bdabbcc9acc43b7ca2c68ce142724afa829abb1be5efd

|

| 3 |

+

size 19970699

|

tokenizer_config.json

ADDED

|

@@ -0,0 +1,325 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"added_tokens_decoder": {

|

| 3 |

+

"151329": {

|

| 4 |

+

"content": "<|endoftext|>",

|

| 5 |

+

"lstrip": false,

|

| 6 |

+

"normalized": false,

|

| 7 |

+

"rstrip": false,

|

| 8 |

+

"single_word": false,

|

| 9 |

+

"special": true

|

| 10 |

+

},

|

| 11 |

+

"151330": {

|

| 12 |

+

"content": "[MASK]",

|

| 13 |

+

"lstrip": false,

|

| 14 |

+

"normalized": false,

|

| 15 |

+

"rstrip": false,

|

| 16 |

+

"single_word": false,

|

| 17 |

+

"special": true

|

| 18 |

+

},

|

| 19 |

+

"151331": {

|

| 20 |

+

"content": "[gMASK]",

|

| 21 |

+

"lstrip": false,

|

| 22 |

+

"normalized": false,

|

| 23 |

+

"rstrip": false,

|

| 24 |

+

"single_word": false,

|

| 25 |

+

"special": true

|

| 26 |

+

},

|

| 27 |

+

"151332": {

|

| 28 |

+

"content": "[sMASK]",

|

| 29 |

+

"lstrip": false,

|

| 30 |

+

"normalized": false,

|

| 31 |

+

"rstrip": false,

|

| 32 |

+

"single_word": false,

|

| 33 |

+

"special": true

|

| 34 |

+

},

|

| 35 |

+

"151333": {

|

| 36 |

+

"content": "<sop>",

|

| 37 |

+

"lstrip": false,

|

| 38 |

+

"normalized": false,

|

| 39 |

+

"rstrip": false,

|

| 40 |

+

"single_word": false,

|

| 41 |

+

"special": true

|

| 42 |

+

},

|

| 43 |

+

"151334": {

|

| 44 |

+

"content": "<eop>",

|

| 45 |

+

"lstrip": false,

|

| 46 |

+

"normalized": false,

|

| 47 |

+

"rstrip": false,

|

| 48 |

+

"single_word": false,

|

| 49 |

+

"special": true

|

| 50 |

+

},

|

| 51 |

+

"151335": {

|

| 52 |

+

"content": "<|system|>",

|

| 53 |

+

"lstrip": false,

|

| 54 |

+

"normalized": false,

|

| 55 |

+

"rstrip": false,

|

| 56 |

+

"single_word": false,

|

| 57 |

+

"special": true

|

| 58 |

+

},

|

| 59 |

+

"151336": {

|

| 60 |

+

"content": "<|user|>",

|

| 61 |

+

"lstrip": false,

|

| 62 |

+

"normalized": false,

|

| 63 |

+

"rstrip": false,

|

| 64 |

+

"single_word": false,

|

| 65 |

+

"special": true

|

| 66 |

+

},

|

| 67 |

+

"151337": {

|

| 68 |

+

"content": "<|assistant|>",

|

| 69 |

+

"lstrip": false,

|

| 70 |

+

"normalized": false,

|

| 71 |

+

"rstrip": false,

|

| 72 |

+

"single_word": false,

|

| 73 |

+

"special": true

|

| 74 |

+

},

|

| 75 |

+

"151338": {

|

| 76 |

+

"content": "<|observation|>",

|

| 77 |

+

"lstrip": false,

|

| 78 |

+

"normalized": false,

|

| 79 |

+

"rstrip": false,

|

| 80 |

+

"single_word": false,

|

| 81 |

+

"special": true

|

| 82 |

+

},

|

| 83 |

+

"151339": {

|

| 84 |

+

"content": "<|begin_of_image|>",

|

| 85 |

+

"lstrip": false,

|

| 86 |

+

"normalized": false,

|

| 87 |

+

"rstrip": false,

|

| 88 |

+

"single_word": false,

|

| 89 |

+

"special": true

|

| 90 |

+

},

|

| 91 |

+

"151340": {

|

| 92 |

+

"content": "<|end_of_image|>",

|

| 93 |

+

"lstrip": false,

|

| 94 |

+

"normalized": false,

|

| 95 |

+

"rstrip": false,

|

| 96 |

+

"single_word": false,

|

| 97 |

+

"special": true

|

| 98 |

+

},

|

| 99 |

+

"151341": {

|

| 100 |

+

"content": "<|begin_of_video|>",

|

| 101 |

+

"lstrip": false,

|

| 102 |

+

"normalized": false,

|

| 103 |

+

"rstrip": false,

|

| 104 |

+

"single_word": false,

|

| 105 |

+

"special": true

|

| 106 |

+

},

|

| 107 |

+

"151342": {

|

| 108 |

+

"content": "<|end_of_video|>",

|

| 109 |

+

"lstrip": false,

|

| 110 |

+

"normalized": false,

|

| 111 |

+

"rstrip": false,

|